It’s 11 PM. You’ve narrowed the audience again. Swapped the bid strategy. Rebuilt the campaign structure for the third time this week. And CPL is still sitting at a stubborn $140. You feel like you’re shouting into a void, but the problem isn’t who you’re shouting at. It’s what you’re saying.

The diagnosis is wrong. The targeting is fine. Meta’s algorithm has enough signal to find your audience - that’s not the constraint. The constraint is the creative. If the creative doesn’t stop the scroll, hold attention, and move someone to click, no amount of audience refinement will fix it.

CPL is a creative metric. Treating it as a targeting metric is the most common and most expensive mistake in B2B Meta advertising.

The fix is not a better audience. It’s a structured creative iteration framework that systematically tests, scores, and replaces underperforming creative until CPL moves. Here’s the framework.

THE CORE FRAMEWORK

To reduce CPL in Meta Ads:

(1) define one variable to test per creative (hook, angle, format, or offer)

(2) run each variant against a consistent audience for 7 days

(3) identify the lowest-CPL variant as the new control

(4) generate 3 - 5 new variants challenging the control

(5) repeat weekly. Each sprint’s winner becomes the new baseline - CPL declines as the control improves.

Why CPL Is a Creative Problem, Not a Targeting Problem

Meta’s algorithm in 2026 is good at finding people who match your targeting parameters. It is not good at making those people care about your ad. That is the creative’s job.

In Meta’s world, the algorithm is the pilot, but the creative is the fuel. You can have the best pilot in the world, but if your fuel is water, the plane stays on the tarmac. The 1.7-second scroll-stop is the only moment that matters.

If CPL is high, one of four creative problems is causing it:

- The hook isn’t stopping the scroll. The first visual or headline isn’t relevant or compelling enough to interrupt the feed.

- The angle isn’t resonating. The core positioning claim doesn’t match what your ICP actually cares about right now.

- The offer isn’t clear. What the prospect is being asked to do, and what they get for doing it, is ambiguous or unattractive.

- Creative fatigue. The same creative has been running long enough that your target audience has seen it multiple times and stopped responding.

Each of these is a testable hypothesis. The creative iteration framework is how you test them systematically.

The Three Enemies of CPL Reduction

1. Generic creative.

Generic creative is like a funhouse mirror: it shows a distorted, blurry version of your brand that no one recognizes. When you say 'we help B2B companies grow,' you aren't being clear; you're being invisible. Specificity is the only lens that brings your ICP’s pain into sharp focus.

2. No testing cadence.

Running the same creative for 6 weeks and expecting different results is not a strategy; it is hope. Without a defined testing cadence (new creative variants every 7 - 14 days), CPL will plateau as creative fatigues and the algorithm exhausts its ability to find new responsive audiences.

3. No iteration loop.

Testing without a structured review process produces data but not learning. The iteration loop is what turns performance data into the next brief. Without it, you’re running experiments without writing up the results, and repeating the same experiment next month.

The Creative Iteration Framework: Step by Step

- Define the control. Your current best-performing ad is the control. If you don’t have performance data yet, Sprint 1 establishes the baseline: run 3 - 5 variants simultaneously against the same audience, and take the lowest-CPL variant as the Sprint 1 control.

- Identify one variable to test. Each sprint tests one creative variable: hook (the first 1.7 seconds), angle (the core positioning claim), format (static vs video vs carousel), or offer (what you’re asking them to do). Testing multiple variables simultaneously produces uninterpretable data.

- Produce 3 - 5 variants challenging the control. Each variant changes only the variable being tested. Same audience. Same offer (if testing hook). Same format (if testing angle). The only difference is the variable under test.

- Run for 7 days. Don’t optimize mid-flight. Let the algorithm complete its learning phase. Review at Day 7.

- Score and identify the winner. The lowest-CPL variant becomes the new control. The losing variants are documented in the Learning Log with a note on why they likely underperformed.

- Write the next hypothesis. Based on what the data showed, what should be tested next? If the hook test showed that pain-point hooks outperformed benefit-statement hooks, the next hypothesis might test two pain-point hook variants against each other.

- Repeat. Every 7 - 14 days. Indefinitely.

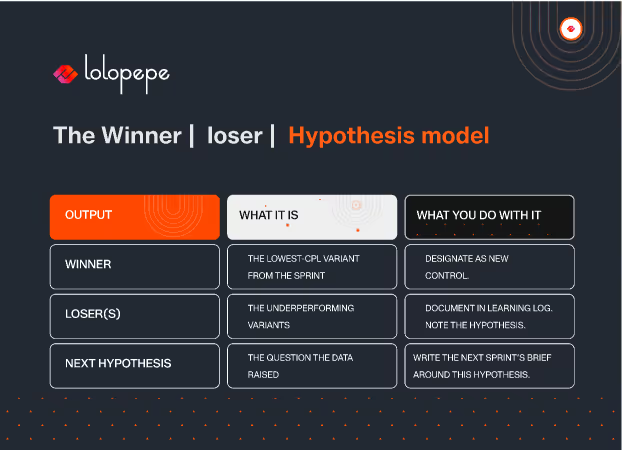
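The "score and identify the winner" step can be sketched in a few lines of Python. This is a minimal illustration, not a reporting integration: the `Variant` class, the field names, and the spend and lead figures are all hypothetical, and it assumes you have already exported per-variant spend and lead counts for the sprint.

```python
from dataclasses import dataclass


@dataclass
class Variant:
    name: str
    spend: float  # ad spend over the sprint, in dollars
    leads: int    # leads attributed to this variant


def cpl(v: Variant) -> float:
    """Cost per lead; infinite when a variant produced no leads."""
    return v.spend / v.leads if v.leads > 0 else float("inf")


def pick_control(variants: list[Variant]) -> Variant:
    """Return the lowest-CPL variant, which becomes the next control."""
    return min(variants, key=cpl)


# Illustrative numbers only: one hook test, same audience, same budget.
sprint = [
    Variant("control", spend=700.0, leads=5),          # $140 CPL
    Variant("pain_point_hook", spend=700.0, leads=7),  # $100 CPL
    Variant("benefit_hook", spend=700.0, leads=4),     # $175 CPL
]
winner = pick_control(sprint)
print(f"New control: {winner.name} at ${cpl(winner):.2f} CPL")
```

Treating a zero-lead variant as infinite CPL keeps it from ever being picked as the control, which seems like the safer default when lead counts per variant are small.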

The Winner/Loser/Hypothesis Model

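Every sprint produces three outputs, not one: a winner, which becomes the new control; the losers, each logged with a note on why it likely underperformed; and a hypothesis for the next sprint.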

The Learning Log is the compounding asset of the iteration framework. After 6 sprints, you have a documented map of what works and what doesn’t for your specific ICP on your specific platform. After 12 sprints, that map is a significant competitive advantage - because your competitors are still guessing.
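One way to keep a Learning Log is as a list of structured records, one per sprint. The sketch below is an assumption about what such a record could hold, based on the winner, loser, and hypothesis outputs described in this article; the `SprintRecord` fields and the sample entries are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class SprintRecord:
    sprint: int
    variable_tested: str  # "hook", "angle", "format", or "offer"
    winner: str
    winner_cpl: float
    # Losing variant name -> note on why it likely underperformed.
    losers: dict[str, str] = field(default_factory=dict)
    next_hypothesis: str = ""


def control_cpl_trend(log: list[SprintRecord]) -> list[float]:
    """CPL of each sprint's winner, in sprint order."""
    return [r.winner_cpl for r in sorted(log, key=lambda r: r.sprint)]


# Illustrative entries only.
learning_log = [
    SprintRecord(
        sprint=1,
        variable_tested="hook",
        winner="pain_point_hook",
        winner_cpl=120.0,
        losers={"benefit_hook": "Benefit statement too generic for the ICP"},
        next_hypothesis="Test two pain-point hooks against each other",
    ),
    SprintRecord(
        sprint=2,
        variable_tested="hook",
        winner="pain_point_hook_v2",
        winner_cpl=100.0,
        losers={"pain_point_hook": "Less specific pain statement"},
        next_hypothesis="Hold the hook constant and test format",
    ),
]
trend = control_cpl_trend(learning_log)
print(trend)
```

A spreadsheet with the same columns would serve equally well. The point is that every sprint leaves behind the same fields, so the log can be read as a trend rather than a pile of notes.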

Testing Velocity: How Often and How Many

In performance marketing, perfection is the enemy of ROI. While you’re spending three weeks polishing one 'masterpiece' ad, your competitor has already run 15 'ugly' tests, found a winning hook, and cut their CPL in half. Velocity is about being faster than the rate of creative decay.

Weekly testing at 15 - 20 variants per month is the benchmark for top-performing B2B Meta accounts. At this velocity, you’re running enough tests to identify statistically significant patterns within 30 days - not 90.

The constraint on testing velocity is usually production capacity, not budget. Running 15 variants per month requires 15 assets per month - which requires a production system capable of delivering that volume reliably.

Creative Scoring Rubric: How to Evaluate Performance Objectively

Subjective creative evaluation (“I like it” / “it doesn’t feel right”) is the fastest way to destroy an iteration framework. Every creative decision must be tied to a measurable metric.


Four metrics that matter for B2B Meta creative evaluation:

Hook rate (scroll-stop rate):

The percentage of people who stopped scrolling on your ad vs total impressions. Benchmark: 3 - 5% for cold audiences, 6 - 10% for warm. Low hook rate = the first frame or headline is not stopping the scroll.

Hold rate (3-second view rate for video):

The percentage of video viewers who watched past 3 seconds. Benchmark: 30 - 40%. Low hold rate = the hook stopped the scroll but the opening didn’t earn continued attention.

CTR (link click-through rate):

The percentage of people who clicked the ad. Benchmark: 0.8 - 1.5% for B2B cold traffic, 2 - 4% for warm retargeting. Low CTR with decent hook rate = the body copy or offer isn’t converting attention into action.

CPL (cost per lead):

The primary performance metric - but a lagging indicator. CPL is the output of all three preceding metrics. Diagnose using hook rate, hold rate, and CTR. Optimize CPL by fixing the metric that’s below benchmark.
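The diagnostic order above (hook rate, then hold rate, then CTR, with CPL as the output) can be sketched as a small calculation. This is an illustration under stated assumptions: the input names such as `scroll_stops` and `views_past_3s` are hypothetical and may not match the column names in your reporting export, and using the lower bound of each cold-audience benchmark range as a pass/fail floor is a simplification.

```python
# Lower bounds of the cold-audience benchmark ranges quoted above (assumed floors).
COLD_FLOORS = {"hook_rate": 0.03, "hold_rate": 0.30, "ctr": 0.008}


def ratio(numerator: float, denominator: float) -> float:
    """Safe division: 0.0 when there is no denominator data yet."""
    return numerator / denominator if denominator else 0.0


def creative_metrics(impressions, scroll_stops, video_viewers,
                     views_past_3s, link_clicks, spend, leads) -> dict:
    """Compute the four rubric metrics from raw counts."""
    return {
        "hook_rate": ratio(scroll_stops, impressions),
        "hold_rate": ratio(views_past_3s, video_viewers),
        "ctr": ratio(link_clicks, impressions),
        "cpl": spend / leads if leads else float("inf"),
    }


def below_benchmark(metrics: dict, floors: dict = COLD_FLOORS) -> list[str]:
    """Names of the leading metrics that fall under their floor, in funnel order."""
    return [name for name, floor in floors.items() if metrics[name] < floor]


# Illustrative counts only.
m = creative_metrics(impressions=20_000, scroll_stops=800, video_viewers=800,
                     views_past_3s=200, link_clicks=120, spend=1_400.0, leads=10)
problems = below_benchmark(m)
print(m["cpl"], problems)
```

In this made-up example the hook rate clears its floor but hold rate and CTR do not, which would point the next sprint at the opening seconds and the offer rather than the first frame.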

Building a Creative Refresh Schedule

Every winning ad eventually hits a marketing plateau. The CPL stays flat for a while, then starts its slow, painful climb up the mountain. A refresh schedule isn't a 'nice-to-have' - it's the oxygen tank that allows you to keep climbing when the creative air gets thin.

Creative fatigue is an expiration date. Your audience has seen your ad so many times it’s become digital wallpaper. They don't hate it; they just don't see it anymore. The early warning signals:

- CPL increasing by 15 - 20% over consecutive days with no audience or bidding change

- Frequency exceeding 3.5 for cold audiences or 6.0 for warm retargeting audiences

- Hook rate declining week-over-week with no creative changes
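These three warning signals can be checked mechanically. The sketch below is one possible reading, not a definitive rule: it assumes "increasing over consecutive days" means CPL rose every day in the window and ended at least 15% above where it started, and the function and parameter names are hypothetical.

```python
# Frequency thresholds quoted above.
FREQUENCY_CAPS = {"cold": 3.5, "warm": 6.0}


def fatigue_signals(daily_cpl, frequency, weekly_hook_rate,
                    audience="cold", cpl_rise=0.15) -> list[str]:
    """Return the fatigue warning signals that are currently firing."""
    signals = []

    # Signal 1: CPL rose every day and the total rise is at least cpl_rise.
    if len(daily_cpl) >= 2 and daily_cpl[0] > 0:
        rising = all(b > a for a, b in zip(daily_cpl, daily_cpl[1:]))
        if rising and daily_cpl[-1] / daily_cpl[0] - 1 >= cpl_rise:
            signals.append("cpl_rising")

    # Signal 2: frequency above the cap for this audience type.
    if frequency > FREQUENCY_CAPS[audience]:
        signals.append("frequency_high")

    # Signal 3: hook rate fell in every week-over-week comparison.
    if len(weekly_hook_rate) >= 2 and all(
        b < a for a, b in zip(weekly_hook_rate, weekly_hook_rate[1:])
    ):
        signals.append("hook_rate_declining")

    return signals


# Illustrative data only.
tired = fatigue_signals([100, 108, 118], frequency=3.8,
                        weekly_hook_rate=[0.045, 0.040, 0.034])
fresh = fatigue_signals([100, 98, 101], frequency=2.0,
                        weekly_hook_rate=[0.040, 0.041])
print(tired, fresh)
```

The check only makes sense when audience and bidding were unchanged over the window, as the signals above require; the code does not verify that for you.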

A structured refresh schedule prevents fatigue-driven CPL spikes:

- Week 1 - 2: Launch new creative sprint. Run 3–5 variants.

- Week 2: Review performance data. Identify winner. Pause losers.

- Week 3: Scale winner. Brief next sprint based on Learning Log hypothesis.

- Week 4: Launch new sprint variants. Winner remains live as the control.

The winning creative from each sprint doesn’t get paused unless fatigue metrics trigger. It runs alongside new variants as the control. This means you always have a performing baseline while you’re testing hypotheses against it.

Frequently Asked Questions

Q: How many ad variants should I run simultaneously?

A: 3 - 5 variants per sprint is the optimal range for B2B Meta. Below 3, you don’t have enough data to identify patterns. Above 5, you fragment your budget enough that each variant reaches statistical significance too slowly. At $5,000 - 10,000/month in ad spend, 3 - 5 variants per sprint produce actionable data within 7 days.

Q: Should I test audiences or creative first?

A: Creative first, always. Audience testing produces incremental improvements in the range of 10 - 20% CPL reduction. Creative iteration produces improvements in the range of 30 - 60%. Maximize the creative variable first - find your winning angle, hook, and format. Then optimize audiences against your best creative. Running audience tests on weak creative produces unreliable data because the creative is a confounding variable.

Q: How do I know when a creative is truly fatigued vs just underperforming?

A: Fatigue has a specific data signature: declining performance on a creative that previously performed well, correlated with rising frequency. Underperformance looks different: consistently weak metrics from launch, not declining from a previous high. A creative that launched at $180 CPL and never improved is not fatigued - it was never a winner. A creative that launched at $85 CPL and has risen to $130 over three weeks with frequency above 4.0 is fatigued. The distinction matters because fatigue justifies retirement; underperformance justifies analysis of what the hook or angle got wrong.
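The distinction can be written down as a simple decision rule. This is a rough sketch under assumptions: `target_cpl` (the CPL you would count as a "winner") is a hypothetical input the article does not define, and the default frequency cap reuses the 3.5 cold-audience threshold from the refresh schedule section.

```python
def classify_creative(launch_cpl: float, current_cpl: float, frequency: float,
                      target_cpl: float, frequency_cap: float = 3.5) -> str:
    """Label a creative 'healthy', 'fatigued', 'underperforming', or 'inconclusive'."""
    if current_cpl <= target_cpl:
        return "healthy"
    if launch_cpl <= target_cpl and frequency > frequency_cap:
        # Started as a winner, now above target with high frequency.
        return "fatigued"
    if launch_cpl > target_cpl:
        # Never hit the target, so it was never a winner.
        return "underperforming"
    # Was a winner, is now above target, but frequency is low:
    # something other than fatigue is probably going on.
    return "inconclusive"


# The two examples from the answer above, with an assumed $100 target CPL.
print(classify_creative(85, 130, frequency=4.2, target_cpl=100))
print(classify_creative(180, 180, frequency=2.0, target_cpl=100))
```

The "inconclusive" branch is deliberate: a former winner whose CPL rose without rising frequency does not fit either signature, so it is flagged for a closer look instead of being retired.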

Q: Can this framework work with a limited creative budget ($1,000–2,000/month in production)?

A: Yes - with one adjustment to production volume. At $1,000 - 2,000/month in production budget, target 6 - 8 variants per month rather than 15 - 20. The framework is identical - one variable tested per sprint, winner identified, Learning Log maintained, next hypothesis derived from data. The velocity is lower, which means CPL improvement takes longer (90 days rather than 30 - 45 days to see a reliable trend). The compounding benefit still applies - it just takes longer to accumulate.

The Bottom Line

The performance marketers consistently achieving the lowest CPL in B2B Meta advertising are not the ones with the most sophisticated targeting setup or the largest budgets. They are the ones running the most structured creative iteration process, testing the most hypotheses, learning from each test, and compounding that learning into an ever-improving creative baseline.

High CPL is not a budget problem. It is a creative problem with a process solution. The framework above provides that process. The only requirement is the discipline to run it consistently and the production capacity to generate the volume of variants that makes testing velocity possible.

Download the Creative Scoring Rubric - a one-page reference with benchmarks for hook rate, hold rate, CTR, and CPL by audience type and ad format.

Stop fighting the algorithm. Start a 7-Day Creative Sprint for $750 and let us engineer a creative baseline that actually moves the needle. Or, start with a free Creative Audit to see why your CPL is stuck.

Mirhayot builds design-led ventures that make impact. He specializes in turning subjective intuition into scalable Brand Operating Systems that empower Series A+ companies to ship daily.

Through his articles, Mirhayot shares the design thinking, strategic frameworks, and creative decisions behind building brands that look and feel like leaders. Whether it's brand systems, web design, or motion, his insights are built from real work with real companies.
