We’ve all seen it: a campaign that’s been '90% ready' for two months. It’s trapped in a loop of endless copy tweaks, font debates, and 'one last look' from the CMO. While your team was arguing over the exact shade of button blue, your competitor shipped three versions, failed twice, and found the winning hook.

Perfectionism isn't a high standard - it’s an expensive delay.

THE CORE PRINCIPLE

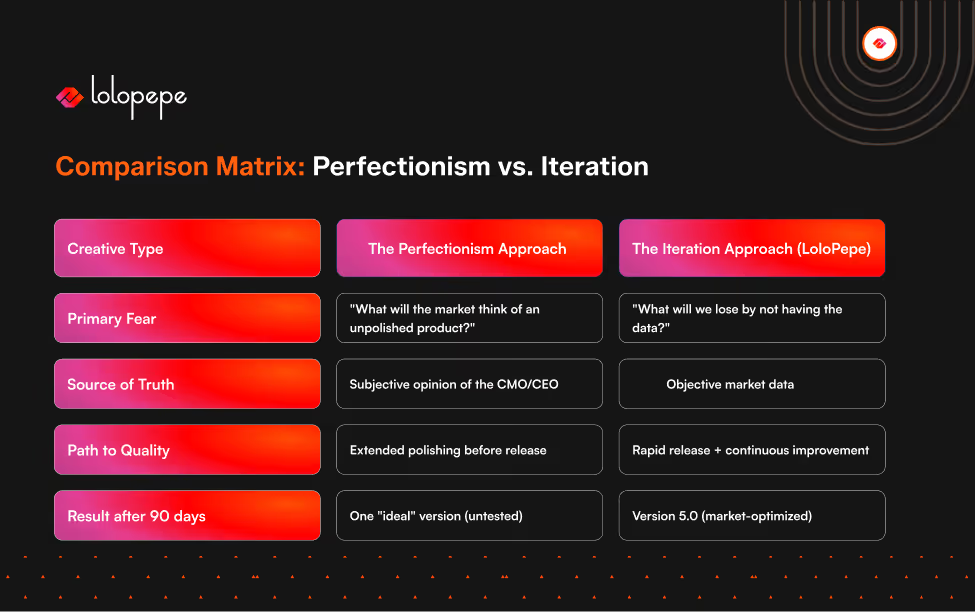

In B2B marketing, iteration consistently outperforms perfection. Version 1 creative is rarely the winning creative - but it is the essential baseline that generates the data needed to produce versions 2 through 5. The teams with the fastest learning loops, not the highest initial quality, produce the best long-term creative performance.

The Perfectionism Trap: Why It Happens in B2B

B2B perfectionism has a specific flavor. Unlike B2C, where campaigns are designed for broad audiences and high volume, B2B campaigns often reach small, high-value audiences. The argument goes that every impression counts, and that a poor-quality ad reaching your 500-person target account list could 'damage' the relationship.

This reasoning sounds logical. It's largely wrong.

Impression permanence is a myth in B2B advertising. B2B marketers act as if every ad is a billboard in front of the prospect’s house that they have to look at for ten years. It isn’t. An ad is a handshake in a crowded room. If the handshake is a bit clumsy, they don’t hate you - they just keep walking. The real danger isn't a clumsy handshake; it’s never entering the room at all because you’re still outside polishing your shoes.

Perfection is relative without data. When a team debates two headline options for two weeks before launching, they're guessing - just with more deliberation. The only way to know which headline performs better is to test them. The two-week debate produced no data. Running both versions for two weeks produces actual insight.

The real cost of a delayed launch is invisible. A campaign delayed by four weeks in a 90-day campaign cycle doesn't just lose four weeks of results - it loses four weeks of learning. At a testing cadence of one iteration per week, that's four creative variants that were never tested. If one of those variants would have cut CPL by 35%, the cost of those four weeks is measurable.
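To make 'measurable' concrete, here is a rough back-of-the-envelope sketch. The $5,000 weekly spend is a hypothetical figure, the $145 baseline CPL is borrowed from the example pattern later in this article, and the function name is purely illustrative. It also assumes the 35% improvement would have applied to every delayed week, which is a simplification.

```python
def leads_lost_to_delay(weekly_spend, baseline_cpl, cpl_reduction, weeks_delayed):
    """Estimate leads forgone by paying baseline CPL instead of the improved CPL.

    Simplifying assumption: the improved variant would have run for
    every one of the delayed weeks at the same weekly spend.
    """
    improved_cpl = baseline_cpl * (1 - cpl_reduction)
    leads_at_baseline = weekly_spend / baseline_cpl
    leads_at_improved = weekly_spend / improved_cpl
    return (leads_at_improved - leads_at_baseline) * weeks_delayed


# Hypothetical inputs: $5,000/week spend, $145 baseline CPL,
# a 35% CPL reduction, and a four-week delay.
lost = leads_lost_to_delay(
    weekly_spend=5000, baseline_cpl=145, cpl_reduction=0.35, weeks_delayed=4
)
print(f"Estimated leads lost to the delay: {lost:.0f}")
```

Swap in your own spend and CPL figures. The point is not the exact number but that the delay has a number at all.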

Every week of 'polishing' is a week of blindness. You aren't just losing time; you are losing the intelligence that only a live market can provide. In B2B, the most expensive creative is the one that's still in Figma.

The Data Case for Iteration

The CPL curve in systematic creative testing follows a consistent pattern. Version 1 establishes the baseline - it's the hypothesis, not the answer. Versions 2 and 3 are refined based on what the data shows: which hook had the highest stop rate, which CTA had the highest click-through, and which visual held attention past the 3-second mark.

Waiting for perfection before launching is like trying to cross a minefield by staring at it. You only find the safe path by taking the first step. Version 1 is your reconnaissance mission. It tells you where the 'mines' (bad hooks) are, so Versions 2 through 5 can reach the objective.

Version 5 doesn't win because your designers got smarter; it wins because the market acted as the grindstone.

Every click and every skip sanded down the rough edges of Version 1 until you were left with a polished diamond. You can’t polish a diamond that hasn't been mined yet. Ship the raw stone first.

VERSION 1 vs. VERSION 5 - THE TYPICAL PATTERN

- V1 CPL (launch baseline): $145

- V2 CPL (hook test): $128 - 12% reduction

- V3 CPL (visual format test): $108 - 16% reduction

- V4 CPL (CTA and offer test): $91 - 16% reduction

- V5 CPL (audience refinement): $82 - 10% reduction

- Cumulative improvement from V1 to V5: 43% CPL reduction

- Total time elapsed: 5 sprint cycles (10 weeks)
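The arithmetic behind these figures is easy to check. The short sketch below recomputes each step's reduction relative to the previous version, plus the cumulative reduction from V1 to V5, using the CPL values listed above. The variable and function names are illustrative only.

```python
# CPL values from the pattern above, in dollars.
cpls = {"V1": 145, "V2": 128, "V3": 108, "V4": 91, "V5": 82}


def pct_reduction(before, after):
    """Percentage drop from `before` to `after`."""
    return (before - after) / before * 100


versions = list(cpls)

# Each step is measured against the version immediately before it.
step_reductions = [
    pct_reduction(cpls[prev], cpls[cur])
    for prev, cur in zip(versions, versions[1:])
]

# The cumulative figure is measured against the V1 baseline.
cumulative = pct_reduction(cpls["V1"], cpls["V5"])

for version, step in zip(versions[1:], step_reductions):
    print(f"{version}: {round(step)}% below the previous version")
print(f"V1 to V5: {round(cumulative)}% cumulative reduction")
```

Note that the step reductions do not add up to the cumulative figure. Each step is relative to a smaller base than the last, so the gains compound rather than sum.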

This pattern repeats across B2B campaigns in performance advertising. The first version is rarely the best version. It is always the necessary version - because without it, you have no data from which to improve.

The teams that produce V5 are the ones that shipped V1 without waiting for it to be perfect.

Creative Iteration vs. Creative Testing: The Distinction That Matters

These terms are often conflated. They're different functions.

Creative testing is running multiple variants simultaneously to identify the winning option. You produce Version A and Version B, run them in parallel, and keep the winner.

Creative iteration is using the results of each test to inform the next version. Version A beats Version B → the learning from A's performance shapes Version C → Version C is tested against Version D → and so on.

Testing without iteration produces a winner. Iteration produces a learning loop. The difference compounds over time: a team that tests but doesn't iterate will find their best creative within the initial option set. A team that iterates will keep improving past that ceiling because each sprint generates new inputs for the next hypothesis.

The Learning Loop is the mechanism that makes creative a compounding asset rather than a recurring cost.

The Rapid Learning Loop: How to Structure Iteration in Practice

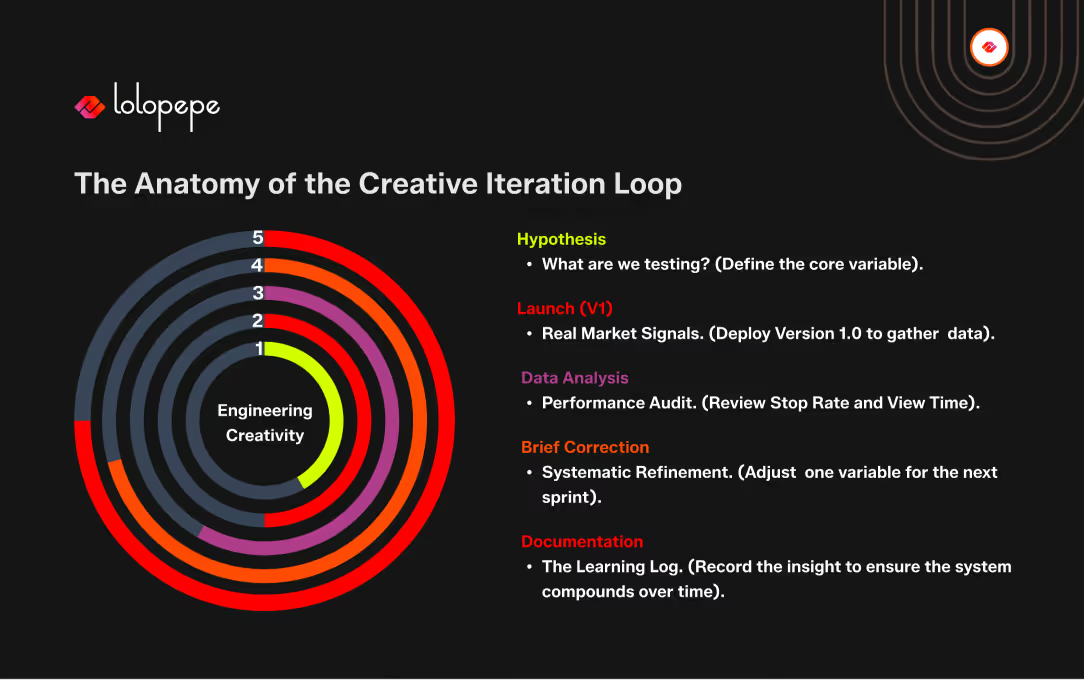

The Learning Loop is a structured process - not an informal 'we look at the numbers and decide what to do next.'

- Hypothesis (pre-launch) - Before producing the creative, document the specific hypothesis: 'We believe that leading with the CPL reduction outcome (rather than the process explanation) will produce a higher CTR for Type A ICPs because they are motivated by performance metrics, not methodology.' The hypothesis is the learning target.

- Launch (with clean tracking) - Deploy with UTM parameters and conversion tracking set up before launch, not retrofitted after. Every test needs clean data from Day 1.

- Data Review (Sprint End) - At the end of each sprint cycle, review: stop rate (was the hook effective?), CTR (was the value prop compelling?), conversion rate (was the CTA clear and the landing page aligned?). Identify the specific creative variable that most influenced each metric.

- Iteration Brief - Write the next brief based on the data findings, not on subjective reaction. If the hook had a low stop rate, the next brief changes the hook. If the hook performed but CTR was low, the next brief changes the body copy or visual. One variable changed per iteration - otherwise you can't isolate what improved.

- Document the Learning - Record hypothesis, test result, and conclusion in the Learning Log. This is the compounding asset. Six months of Learning Logs turn into a deep understanding of what works for your specific audience - an advantage that is genuinely difficult for a competitor to replicate.
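The steps above can be sketched as a small data structure plus a decision rule. This is a minimal illustration, not a prescribed implementation: the threshold values, the `linkedin` and `paid_social` UTM values, the example URL, and every class and function name are hypothetical placeholders. Only the ordering of the rule (hook first, then body copy or visual, then CTA and landing page) comes from the steps described above.

```python
from dataclasses import dataclass
from urllib.parse import urlencode

# Hypothetical thresholds: replace with your own benchmarks.
THRESHOLDS = {"stop_rate": 0.25, "ctr": 0.008, "conversion_rate": 0.03}


@dataclass
class SprintResult:
    variant: str
    hypothesis: str
    stop_rate: float        # was the hook effective?
    ctr: float              # was the value prop compelling?
    conversion_rate: float  # was the CTA clear and the landing page aligned?


def tracked_url(base_url, campaign, variant):
    """Attach UTM parameters before launch so each variant has clean data."""
    params = {
        "utm_source": "linkedin",      # assumed channel
        "utm_medium": "paid_social",   # assumed medium
        "utm_campaign": campaign,
        "utm_content": variant,
    }
    return f"{base_url}?{urlencode(params)}"


def next_variable_to_change(result):
    """Pick exactly one variable for the next brief, based on the data."""
    if result.stop_rate < THRESHOLDS["stop_rate"]:
        return "hook"
    if result.ctr < THRESHOLDS["ctr"]:
        return "body copy or visual"
    if result.conversion_rate < THRESHOLDS["conversion_rate"]:
        return "CTA or landing page"
    return "none: keep as control and test a new hypothesis"


learning_log = []

# Example sprint with made-up numbers.
v1 = SprintResult(
    variant="v1",
    hypothesis="Leading with the CPL outcome beats leading with the process",
    stop_rate=0.31,
    ctr=0.005,
    conversion_rate=0.04,
)
url = tracked_url("https://example.com/demo", "q3_sprint", v1.variant)
decision = next_variable_to_change(v1)
learning_log.append({"result": v1, "url": url, "next_change": decision})
print(decision)
```

Returning a single variable per review enforces the one-change-per-iteration rule, and appending every result to the log is what turns individual sprints into a record you can learn from.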

What 'Good Enough to Ship' Actually Means

Iteration-over-perfection is not an argument for low standards. It's an argument for appropriate standards at the right stage of production.

The 'Good Enough' bar is simple: If this ad went live right now, would it embarrass us or just inform us? If it communicates the value clearly, it’s ready. Stop waiting for 'Impressive.' Aim for 'Clear.' Clarity generates data. Polish generates delays.

Version 1 does NOT need to be:

- The best possible execution of the concept.

- The most optimized headline.

- The final word on visual direction.

- 'Stunning' - it needs to be clear.

The test for 'good enough to ship' is simple: does this creative accurately represent our brand and offer, and will it generate the data we need to improve it? If yes - it ships.

If the team is debating whether it's 'impressive enough' or 'polished enough' - that's perfectionism language. Impressive and polished are post-iteration descriptors. Version 1 is designed to learn, not to impress.

Frequently Asked Questions

Q: Won't shipping a lower-quality Version 1 damage our brand?

A: Mediocre ads rarely cause brand damage in B2B advertising. An ad that doesn't resonate gets ignored - it doesn't generate negative brand associations. The brand risk in B2B advertising is not low quality. It's irrelevance - speaking to the wrong pain, with the wrong message, that was never tested because the team spent three months perfecting one version. That's the brand risk worth managing.

Q: How do we know when a creative is 'ready' to ship?

A: Use the four-part checklist: (1) On-brand - logo, colors, tone correct. (2) On-brief - addresses the correct audience, angle, and objective. (3) Technically compliant - platform specs, copy limits, file format confirmed. (4) One-idea clarity - communicates a single clear message without requiring effort to decode. If all four are met, it ships. If the team is still debating, they're not using this checklist - they're using subjective preference, which is perfectionism.

Q: What if we only get one impression with a high-value account?

A: The premise is the problem. B2B campaigns targeting high-value accounts are frequency-based - the goal is multiple impressions over a sustained period, not one perfect first impression. If the account sees your ad weekly for eight weeks, the quality of week one matters far less than the accumulated relevance of weeks two through eight - which improves through iteration. The single-impression assumption is how perfectionism justifies itself in account-based marketing contexts.

Q: Is this approach compatible with high-end brand positioning?

A: Yes - with one clarification. 'High-end brand positioning' refers to the standards for the final product, not those of the testing phase. Luxury brands still test. They just don't publish the test results. LoloPepe's version: the internal testing standard is 'good enough to learn from,' and the public-facing standard is determined by what the data shows works. The iteration happens. Only the best versions go wide.

The Bottom Line

The best B2B creative teams are not the ones with the highest standards on Day 1. They're the ones with the most disciplined learning loops from Day 1 to Day 90.

Version 1 is not your best work. It's your first hypothesis. The fastest path to your best work is through Version 1, not around it.

Ship. Measure. Iterate. Ship again. That's not a compromise on quality - it's the only reliable path to it.

LoloPepe's sprint model is built for iteration by default.

Every sprint ends with a data review. Every new brief starts with the learning from the last.

See the system in action - start a 7-Day Creative Sprint for $750.

Mirhayot builds design infrastructure for founders who have no time for fluff. He specializes in turning subjective intuition into scalable Brand Operating Systems that empower Series B+ companies to ship daily.

Through his articles, Mirhayot shares the design thinking, strategic frameworks, and creative decisions behind building brands that look and feel like leaders. Whether it's brand systems, web design, or motion, his insights are built from real work with real companies.
